Long-running GPU-powered Jupyter notebooks

September 10, 2023

With AI/ML hype at an all-time high, many people are interested in experimenting with the latest models, learning how to train them, and learning how to deploy them. But the main stumbling block for most newcomers is getting their hands on an NVIDIA GPU. Essentially every ML library uses CUDA, NVIDIA's API for running parallel computations on graphics cards, which is available on every NVIDIA GPU. These days, if you want an NVIDIA GPU, you have two options:

- Get a dedicated machine with an NVIDIA GPU installed (or install one yourself) so your ML library of choice can use GPU acceleration. This involves setting up and updating drivers and libraries to keep your machine compatible with whatever model or code you are trying to run.

- Use a cloud-based solution such as Colab or Kaggle notebooks to do your experimentation in the cloud.
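
Whichever option you pick, it helps to confirm that the machine can actually see an NVIDIA GPU before installing any ML libraries. Here is a minimal sketch that shells out to `nvidia-smi`, the command-line tool that ships with the NVIDIA driver. The function name `list_nvidia_gpus` is made up for this example, and the sketch assumes that a missing or failing `nvidia-smi` means there is no usable GPU.

```python
import shutil
import subprocess


def list_nvidia_gpus() -> list[str]:
    """Return one "name, driver version" string per visible NVIDIA GPU."""
    # nvidia-smi is installed with the NVIDIA driver; if it is missing,
    # assume there is no usable GPU on this machine.
    if shutil.which("nvidia-smi") is None:
        return []
    try:
        result = subprocess.run(
            ["nvidia-smi", "--query-gpu=name,driver_version", "--format=csv,noheader"],
            capture_output=True,
            text=True,
            check=True,
            timeout=30,
        )
    except (subprocess.SubprocessError, OSError):
        # The tool exists but could not talk to the driver.
        return []
    return [line.strip() for line in result.stdout.splitlines() if line.strip()]


if __name__ == "__main__":
    gpus = list_nvidia_gpus()
    if gpus:
        for gpu in gpus:
            print(f"Found GPU: {gpu}")
    else:
        print("No NVIDIA GPU detected")
```

The same check works on a cloud instance, which makes it a quick sanity test that the GPU you are paying for is really attached.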

| Having your own GPU | |
|---|---|
| Pros | Cons |
| No recurring costs, just pay for the GPU once | Big upfront investment |
| No internet connection required | Need to use the same (bulky) laptop wherever you want to work |
| | Upgrading costs a lot more money, since you have to buy a new card or machine |

| Using a cloud notebook | |
|---|---|
| Pros | Cons |
| Generous free tiers usually | Generally requires paying money to do anything significant |
| Always up-to-date on drivers, libraries, and hardware | Must always be connected to the internet |
| Usable from any device | |

Both have their advantages and disadvantages. While having your own dedicated GPU might seem cheaper in the long run, you may find that your machine quickly becomes outdated, and then you’re stuck with an expensive computer that no longer serves its primary purpose well. It’s a problem I personally experienced after I purchased my System76 Gazelle a few years ago. Now it feels sluggish compared to newer GPUs, especially when working with language models. Not to mention the noise the fan makes when the GPU kicks in, and the annoying process of installing the proper libraries with conda and keeping the NVIDIA drivers up-to-date.

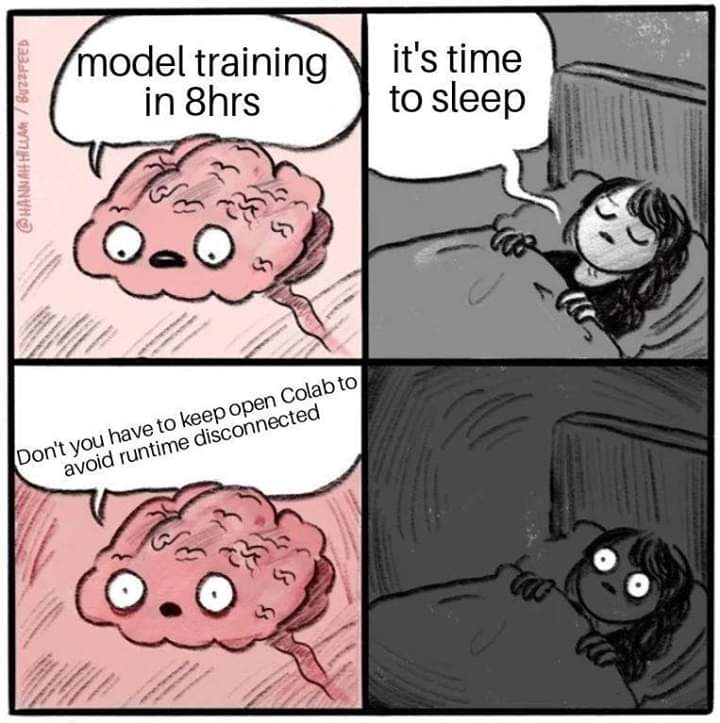
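
To make the "cheaper in the long run" question concrete, here is a rough break-even sketch. The prices are hypothetical and only for illustration, and the calculation deliberately ignores electricity, resale value, and the fact that cloud hardware keeps improving while a purchased machine does not.

```python
def break_even_hours(gpu_machine_price: float, cloud_rate_per_hour: float) -> float:
    """Hours of cloud usage that cost as much as buying the machine outright."""
    if cloud_rate_per_hour <= 0:
        raise ValueError("cloud_rate_per_hour must be positive")
    return gpu_machine_price / cloud_rate_per_hour


# Hypothetical numbers, for illustration only.
machine_price = 2000.0  # one-time cost of a GPU laptop, in dollars
cloud_rate = 1.0        # cost of a cloud GPU instance, in dollars per hour
hours_per_week = 10.0   # how much you actually experiment

hours = break_even_hours(machine_price, cloud_rate)
print(f"Break-even after {hours:.0f} hours of cloud use")
print(f"That is about {hours / hours_per_week:.0f} weeks at {hours_per_week:.0f} hours per week")
```

With these made-up numbers, the break-even point is 2000 hours, or about 200 weeks of casual use. Plug in your own prices and usage to see which side of the line you fall on.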

Cloud notebooks like Colab, on the other hand, remove the need to maintain drivers, hardware, and library installations. In addition, the free tier is usually generous enough for beginners to learn. However, you will almost certainly run into problems if you want to try training or fine-tuning language models. Because language models have to predict the next word in a sequence of words, the matrices they train are quite large, which leads to long training times. You will soon discover on platforms like Colab that the notebook disconnects long before your training is finished, and it’s not at all obvious from Googling what you can actually do about it.

This is where Vertex AI notebooks from Google Cloud come in. Vertex AI lets you start up a compute instance with a GPU, and then run a Jupyter notebook connected to that instance. Because you have a dedicated instance, the notebook will just keep running. The notebook may still disconnect when your computer sleeps, because the websocket connection gets broken, but there are many simple ways to keep your computer awake, such as the Caffeine/Caffeinated apps.
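
If you are on a Mac and would rather not install an app, the built-in `caffeinate` command does the same job. Here is a minimal sketch of a context manager wrapped around it. The name `keep_awake` is made up for this example, and the sketch assumes macOS; on any other system it does nothing and reports that sleep prevention is not active.

```python
import contextlib
import platform
import shutil
import subprocess


@contextlib.contextmanager
def keep_awake():
    """Prevent idle sleep on macOS while the with-block runs; no-op elsewhere."""
    process = None
    if platform.system() == "Darwin" and shutil.which("caffeinate"):
        # `caffeinate -i` blocks idle sleep until the process is terminated.
        process = subprocess.Popen(["caffeinate", "-i"])
    try:
        # Tell the caller whether sleep prevention is actually active.
        yield process is not None
    finally:
        if process is not None:
            process.terminate()
            process.wait()


if __name__ == "__main__":
    with keep_awake() as active:
        print("Sleep prevention active:", active)
        # Keep this block open for as long as the notebook must stay connected.
```

Note that this only prevents idle sleep. Closing the laptop lid will usually still put the machine to sleep and break the connection.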

I would start with the g2-standard-4 machine, which comes with one GPU. If that is not enough, you can consider upgrading to the g2-standard-24 machine, which has two GPUs. For learning purposes, it is unlikely you will need more than that, but the g2-standard-48, a2-highgpu-*, and a2-megagpu-* instances also exist. Keep in mind, though, that the cost increases with the power and number of GPUs you use.
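
The "start small, upgrade only when needed" advice can be sketched as a small lookup. The GPU counts for g2-standard-4 and g2-standard-24 are the ones given above. The count of four for g2-standard-48 is my understanding of Google Cloud's machine type documentation, so double-check it there. The function name is made up for this example.

```python
# GPU counts per G2 machine type. The first two come from the text above;
# the count for g2-standard-48 is an assumption to verify against
# Google Cloud's documentation.
G2_GPU_COUNTS = {
    "g2-standard-4": 1,
    "g2-standard-24": 2,
    "g2-standard-48": 4,
}


def smallest_machine_for(gpus_needed: int) -> str:
    """Pick the G2 machine type with the fewest GPUs that still has enough."""
    if gpus_needed < 1:
        raise ValueError("gpus_needed must be at least 1")
    candidates = [
        (count, name)
        for name, count in G2_GPU_COUNTS.items()
        if count >= gpus_needed
    ]
    if not candidates:
        raise ValueError(f"No listed G2 machine has {gpus_needed} GPUs")
    # min() compares GPU count first, so the smallest adequate machine wins.
    return min(candidates)[1]


if __name__ == "__main__":
    print(smallest_machine_for(1))
    print(smallest_machine_for(2))
```

Since cost grows with the number of GPUs, picking the smallest adequate machine is also the cheapest choice within this family.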

Let me know what you think about this article by leaving a comment below, reaching out to me on Twitter, or sending me an email at pkukkapalli@gmail.com.